TensorFlow Lite Tutorial Part 2: Speech Recognition Model Training

2020-02-11 | By ShawnHymel

License: Attribution Raspberry Pi SBC

In the previous tutorial, we downloaded the Google Speech Commands dataset, read the individual files, and converted the raw audio clips into Mel Frequency Cepstral Coefficients (MFCCs). We also split these features into training, cross validation, and test sets. Because we saved these feature sets to a file, we can read that file from disk to begin our model training.

In this tutorial, we will briefly go over how a convolutional neural network (CNN) works and how to train one using TensorFlow and Keras. We’ll save the model as a file on our hard disk so we can use it later for making predictions on real-time audio data.

We also cover this content in video format here:

Overview

There are a few different ways we can tackle training a mathematical model to classify spoken words. Initially, you might think of having it classify one of many different words.

When we deploy our model, it will always be listening. Each second of sound it hears, it will compute the MFCCs for that sound bite and send those MFCC features to the model to make a prediction. The model will perform a bunch of math and spit out the confidence (or probability level) that it thinks the MFCCs belong in each class.

In the example above, the model thinks the speaker said “stop” out of many different words. This is known as categorical classification. This might be useful for using several keywords, like “forward” and “backward” to control a robot, but it wastes many computational cycles to compute all those other probabilities when we only care about one word.

A more efficient model might look like this:

Here, we just care if the speaker said “stop” out of every other possible sound. All other sounds and words can simply be classified as “not stop.” This saves us a lot of computation, and it’s known as binary classification, as there are only two possible outcomes. The output of the model is simply a probablity (or conficence level) of how much it thinks the input features belong in the category "stop."

Convolutional Neural Network

A model is simply a collection of numbers and structure that applies some math between those numbers and some kind of input (more numbers!). In our case, we’re going to use a convolutional neural network, which is an extremely popular model for performing image classification.

This video from 3Blue1Brown is a great introduction to neural networks. For convolutional neural networks, I recommend checking out this article.

A convolutional neural network (CNN) is made up of 2 parts: a convolutional section and a classification section.

We will be starting with the convolutional neural network found in this GeeksforGeeks article. It is a fairly simple CNN such that we can conceptualize it easily and it will not require too many calculations to use. However, it seems to work reasonably well at classifying our sounds.

The convolutional section contains one or more layers of neural networks that perform the “convolution” operation on the input image. For our purposes, “convolution” refers to filtering the image to identify features in that image. An image filter is a window that moves across the whole image, taking a sample of pixels (2x2 pixels in the first layer for us) and multiplying those pixels by some weights and then taking the sum to create a pixel on the next image. This window is known as a kernel.

We have 32 nodes in the first convolutional layer, which means we end up with 32 smaller images after the first round of computations. These smaller images are filtered outputs of the input, and they often highlight fine details, such as edges and corners, of the original image.

After convolution, we perform the rectified linear unit (ReLU) activation function, which just converts any negative values to 0. This adds a level of non-linearity to the neural network, which allows us to train it for classification and feature extraction. See this article to learn more about ReLU activation.

We then perform a Max Pooling operation, where another window slides across each filtered image and drops all but the highest pixel value in that window. This helps keep the most important features and information about their approximate location (rather than their exact location in the image). It also helps reduce computational load for the next layers. This article has some great information on Max Pooling.

We repeat this Convolution-ReLU-Max Pooling process two more times to get a final set of 64 feature maps. With each successive convolutional layer, we train the network to learn more complex features. For example, the first layer might be edges and corners, but the final layer (on something like a network trained to identify dog breeds) might be “floppy ears” versus “pointed ears.”

These image features are then flattened into one, long vector (rather than being a collection of 2D matrices), which are fed into a fully-connected neural network for classification. In our example, the network is only 2 layers deep. There is one layer of 64 nodes with ReLU activations and another, single-node layer. Because we gave the final node the sigmoid activation function, it will give us a number between 0 and 1 that corresponds to the confidence the model has about the input image being MFCCs for the word “stop.”

We can set a threshold, such as 0.5, and we can say that any output over that threshold means the input image belonged to our target wake word.

You might notice that we also have a dropout layer between the two final layers in the model. A 0.5 dropout layer means that 50% of the node outputs from the previous layer are randomly ignored going into the next layer. This helps add some noise-like randomness in the network and can help with overfitting. See this article to learn more about dropout layers.

At this point, we’re ready to implement the neural network in code! Don’t worry if you do not fully grasp everything about CNNs. Right now, it’s important to know that they are popular for image classification, and we’re using a pre-made CNN to help classify the MFCC features from spoken words. You are very much encouraged to play around with some of the variables, like adding or removing layers/nodes in the neural network to see if it changes the performance at all.

Run Python Script to Train the Convolutional Neural Network

Navigate to https://github.com/ShawnHymel/tflite-speech-recognition and download the Jupyter Notebook and Python files. Start a Jupyter Notebook session on your computer and open 02-speech-commands-mfcc-classifier.

If you do not have one of the packages listed in the first cell (or throughout the Notebook), you can install it by running the following in its own cell:

!pip install <name of package>

Change the dataset_path variable to point to the Google Speech Commands dataset directory on your computer, and change the feature_sets_path variable to point to the location of the all_targets_mfcc_sets.npz file.

I recommend running the notebook one cell at a time to get an understanding for what’s happening.

We construct the CNN with the following lines of Keras code:

# Build model

# Based on: https://www.geeksforgeeks.org/python-image-classification-using-keras/

model = models.Sequential()

model.add(layers.Conv2D(32,

(2, 2),

activation='relu',

input_shape=sample_shape))

model.add(layers.MaxPooling2D(pool_size=(2, 2)))

model.add(layers.Conv2D(32, (2, 2), activation='relu'))

model.add(layers.MaxPooling2D(pool_size=(2, 2)))

model.add(layers.Conv2D(64, (2, 2), activation='relu'))

model.add(layers.MaxPooling2D(pool_size=(2, 2)))

# Classifier

model.add(layers.Flatten())

model.add(layers.Dense(64, activation='relu'))

model.add(layers.Dropout(0.5))

model.add(layers.Dense(1, activation='sigmoid'))We can then print out a summary of the neural network to get an idea of its shape and size, noting the number of nodes in each layer and the operation of each layer.

Take a look at the total number of parameters. This gives you an idea of how big the model will be, as it offers some insight into the number of weights, biases, and operations that need to be performed. The larger this number, the more computational cycles are required to train the model and make predictions. For deploying this model on an edge device, like a Raspberry Pi, we will want to make this number as small as possible while still keeping the model accurate.

When you get to the # Train cell, you will have the model ready to start the training process.

Note that the previous cell, we configured the model with a binary_crossentropy loss function, which is the equation the model will use to measure the difference between the predicted outcome and actual answer for each sample. This is very important in training! The binary_crossentropy loss function is specifically designed to help train models for binary classification.

We also add the rmsprop function as our optimizer. An optimizer is the algorithm used to change the weights and bias terms in a neural network so that it more accurately predicts answers on the next iteration. Popular optimizers include stochastic gradient descent (SGD), RMSProp, and Adam. This article offers a good introduction to these optimizers.

Finally, we tell the training procedure that we want to measure accuracy along the way by adding it to our metrics parameter. This will compare the model’s output to the known good values in the Y vector and give us a score of how often the model got the answer right.

When you run the # Train cell, you should ideally see the loss scores decrease and the accuracy scores increase each epoch, letting you know the model got better at making predictions.

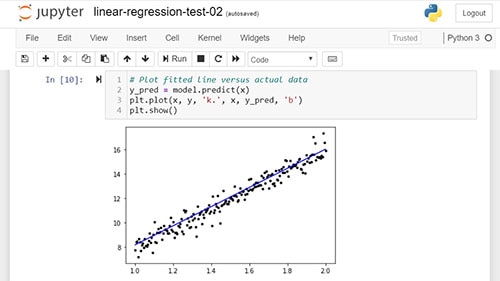

After training is finished (which can take up to an hour, from my experience), you can graph accuracy and loss values as a function of time (measured in epochs).

Ideally, you should see the accuracy increase over time and converge on some value and the loss value decrease over time and converge on some value.

Remember how we set aside a validation set during feature extraction? After each epoch, the model’s performance is evaluated on the validation set to create a measure of validation loss and validation accuracy.

The accuracy and loss values are determined from the training set data. In theory, the validation and training accuracy/loss should be the same. However, you’ll often find that the model performs better on the training data than the validation data. If that gap is too wide, the model is said to be suffering from overfitting. Overfitting means that the model learned features unique to the training set and does not perform well on unseen data. There are a number of ways to tackle overfitting, but for now, you should just be aware that it can occur--watch for a large gap between training accuracy and validation accuracy in your results.

Note that you can run the training process again to get slightly different results. The initial weights and biases of the network are randomly chosen, so where the model ends up could be somewhat random.

Once you are happy with the results, we use the save_model command in Keras to save the neural network as a .h5 file.

If you would like to try having the model make a prediction on one sample, you can use the model.predict() function. Just remember that you have to give it MFCCs from a 1-second clip of audio.

We can also evaluate the model on our test set using model.evaluate(). This function has the model perform a series of predictions and compares the output to the known answers stored in the y_test vector.

It looks like our model performed with an accuracy of 98.4% on the test set!

While that might seem high, remember that of all the words we trained with, only about 4% of them were “stop.” That means, if the model simply guessed “not stop” for every audio clip, it would be right 96% of the time! Our model needs to do better than 96% on unseen data to be useful.

MFCC calculations, model shape and size, number of epochs, etc. are considered hyperparameters. These are values that are set prior to training and are not automatically updated during training. Feel free to play around with these hyperparameters to see if you can get the model to be any more accurate!

Going Further

Now that we have a fully trained model, we’re ready to deploy it on our target device. If you’d like to dig deeper into convolutional neural networks, here are some resources that might help you:

Recommended Reading

TensorFlow Lite Tutorial Part 1: Wake Word Feature Extraction

TensorFlow Lite Tutorial Part 3: Speech Recognition on Raspberry Pi