Edge AI Anomaly Detection Part 4 - Machine Learning on ESP32 via Arduino

2020-06-22 | By ShawnHymel

License: Attribution Arduino

In the previous tutorial, we deployed both of our machine learning algorithms (Mahalanobis Distance and Autoencoder) to a Raspberry Pi. While it worked, we determined that neither model was very robust. Moving the accelerometer or fan (even slightly) would require us to re-train and re-deploy the model(s).

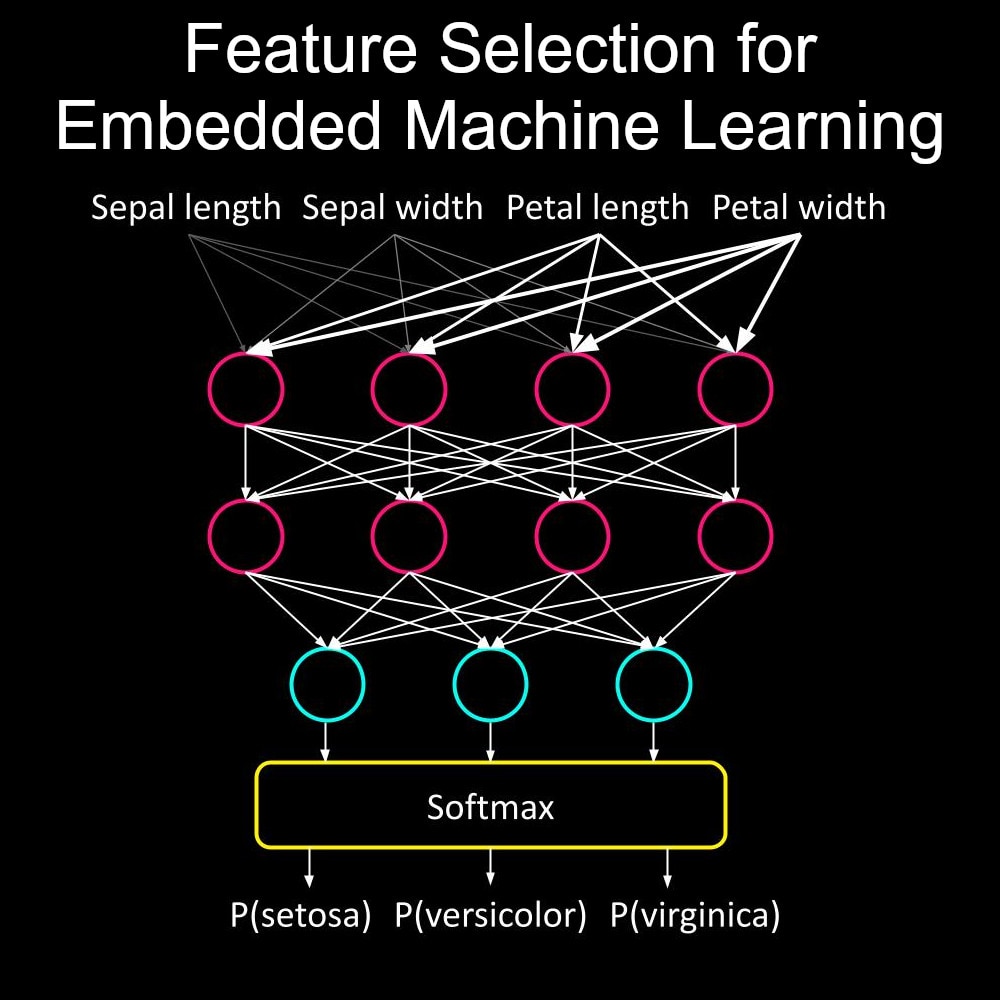

To fix that, we’re going to first try training a more robust model in this tutorial. Then, we’re going to deploy models to an ESP32 using Arduino. For the Mahalanobis Distance, we’ll need to develop the prediction calculations manually in C. For the Autoencoder, we can use the Arduino TensorFlow Lite library to perform inference.


Training a More Robust Model

Let’s try to fix the problem we found last time! Training a model for a system in one particular state (position, environment, location, etc.) works, but it’s probably not ideal for most applications. To remedy that, we can try to create a more robust model.

Ideally, the new model will capture all the possible states of a system while it’s running normally. Anything outside of this will be an “anomaly.” Unfortunately, this is difficult to do: the more robust a model, the more it starts to think that everything is “normal.” If you run into a situation where a model is not working, you might have to go back to the drawing board and re-examine which features and types of models to use.

Another option is to train a model for each state a system can be in. This might work if you have distinct states (e.g. an engine with set RPM modes) but not if the state changes continuously (e.g. a continuously variable transmission).

Follow all of the steps in the first tutorial (again). However, this time, you’ll want to move the base of the fan around a little each minute or so during collection. This allows the samples to represent the fan being in several different states. Once again, we’ll consider low speed to be “normal.”

From there, repeat the steps in the second tutorial to train new Mahalanobis Distance and Autoencoder models.

What you’ll likely find is that there is no longer a clear separation between normal and anomaly samples (for both the Mahalanobis Distance and Autoencoder methods). With some overlap, it will be trickier to choose a threshold.

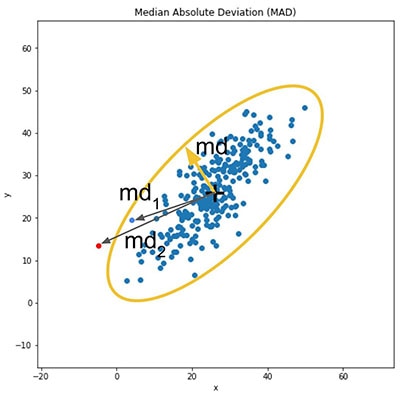

Here are the outputs of the validation normal and anomaly sets for the Mahalanobis Distance (blue is normal, red is anomaly):

Here are the outputs of the normal and anomaly test sets for the Autoencoder:

We’ll want to include as many of the normal samples as possible without falsely triggering our anomaly alarm. Based on the plots above, I chose the following thresholds for deployment:

Mahalanobis Distance Threshold: 1.0

Autoencoder Threshold: 2e-05

As you can probably guess based on these plots, the Autoencoder will work better than the Mahalanobis Distance when using a more robust model. This is because the neural network is better at finding features that characterize the system than simpler statistical methods (such as the mean).

Converting Mahalanobis Distance to C

The next step is to convert our prediction code from Python to C. As we do not have access in C to many of the wonderful high-level libraries we used in Python, like NumPy and SciPy, we’ll have to do things manually. First, we’ll save our models as C header files. Then, we’ll save a few samples for testing. We’ll use these samples to develop our algorithms for detecting anomalies.

Head to the code repo here: https://github.com/ShawnHymel/tinyml-example-anomaly-detection

In utils/, you should see c_writer.py. Take a look at this Python program. It contains a couple of functions that help us generate C code (specifically, header files). We will use it in our conversion programs to save our models and example samples in arrays.

Run mahalanobis_distance/anomaly-detection-md-conversion.ipynb. Feel free to change the settings to load the correct .npz model file and save the .h files where you wish. The script will first compute the inverse covariance matrix and save it along with the mean from the original model as a C array in a separate header file.

Next, the script will convert one normal sample and one anomaly sample (saved as normal_anomaly_samples.npz) to separate header files, which we can use for testing.

Finally, we want to develop a set of manual calculations to compute the median, median absolute deviation (MAD), matrix multiplication, and Mahalanobis distance (MD). These will be translated to C (stored as functions in utils/utils.h and utils/utils.c).
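As a rough illustration of the first two calculations, here is a minimal sketch of a median and MAD in plain C. The function names (median, median_abs_deviation) are hypothetical and are not necessarily the ones used in utils.c. The sketch returns the unscaled MAD. Some SciPy MAD functions multiply by a normalization constant by default, so if your Python code does that, apply the same factor in C before comparing outputs.

```c
#include <assert.h>
#include <math.h>
#include <stdlib.h>
#include <string.h>

/* Comparison callback for qsort(): orders floats ascending. */
static int compare_floats(const void *a, const void *b) {
  float fa = *(const float *)a;
  float fb = *(const float *)b;
  return (fa > fb) - (fa < fb);
}

/* Median of n values. Sorts a scratch copy so the input is left untouched. */
float median(const float *values, size_t n) {
  assert(values != NULL && n > 0);
  float *scratch = (float *)malloc(n * sizeof(float));
  assert(scratch != NULL);
  memcpy(scratch, values, n * sizeof(float));
  qsort(scratch, n, sizeof(float), compare_floats);

  /* Odd count: middle element. Even count: mean of the two middle elements. */
  float result = (n % 2 == 1)
                     ? scratch[n / 2]
                     : 0.5f * (scratch[n / 2 - 1] + scratch[n / 2]);
  free(scratch);
  return result;
}

/* Unscaled median absolute deviation: median(|x_i - median(x)|). */
float median_abs_deviation(const float *values, size_t n) {
  assert(values != NULL && n > 0);
  float med = median(values, n);
  float *dev = (float *)malloc(n * sizeof(float));
  assert(dev != NULL);
  for (size_t i = 0; i < n; i++) {
    dev[i] = fabsf(values[i] - med);
  }
  float result = median(dev, n);
  free(dev);
  return result;
}
```

Sorting a copy costs some extra memory, which matters on a microcontroller. If the sample buffer can be overwritten, sorting in place avoids the allocation.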

To develop these functions, we will use our normal and anomaly samples to compare the output of each intermediate calculation (between the Python and C implementations).

Open the Arduino IDE and load mahalanobis_distance/esp32_test_md.ino. You’ll want to copy the newly generated .h files containing the model and samples to your sketch folder. You should see the model file, samples, and utils as separate tabs. Run this program, and it will read the samples, calculate the MAD of each, and compute the Mahalanobis Distance between each sample and the model’s mean.
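For reference, the distance calculation can be sketched as follows. This is only an illustration under a few assumptions: the function name mahalanobis_sq is hypothetical, the inverse covariance matrix is assumed to be stored as a flat row-major array, and the function returns the squared form (x - mean)^T * inv_cov * (x - mean) with no square root. Check which form your Python code uses before comparing numbers.

```c
#include <assert.h>
#include <stddef.h>

/*
 * Squared Mahalanobis distance between a feature vector x and the model mean.
 * inv_cov is an n-by-n inverse covariance matrix in row-major order
 * (assumed layout), so element (i, j) lives at inv_cov[i * n + j].
 */
float mahalanobis_sq(const float *x, const float *mean,
                     const float *inv_cov, size_t n) {
  assert(x != NULL && mean != NULL && inv_cov != NULL && n > 0);
  float dist = 0.0f;
  for (size_t i = 0; i < n; i++) {
    /* row = (inv_cov * (x - mean))[i] */
    float row = 0.0f;
    for (size_t j = 0; j < n; j++) {
      row += inv_cov[i * n + j] * (x[j] - mean[j]);
    }
    /* Accumulate (x - mean)[i] * row to finish the quadratic form. */
    dist += (x[i] - mean[i]) * row;
  }
  return dist;
}
```

With three features, a call might look like mahalanobis_sq(features, model_mean, model_inv_cov, 3), where the array names are placeholders for whatever your generated header defines.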

Compare the output of each intermediate calculation to the ones we got in Python:

Once we’re sure that our C functions give us the same output as the Python calculations (using NumPy, SciPy, TensorFlow and other libraries), we can develop our final deployment code.
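Keep in mind that “the same output” rarely means bit-for-bit identical, since the C code here works in 32-bit floats while NumPy often defaults to 64-bit. One way to make the comparison explicit is a small tolerance check. The helper below is a hypothetical sketch (approx_equal is not part of the repo) that uses the same form as NumPy’s isclose: the difference must be no larger than abs_tol + rel_tol * |expected|.

```c
#include <assert.h>
#include <math.h>
#include <stdbool.h>

/*
 * Returns true if `actual` (computed in C) is close enough to `expected`
 * (a reference value copied from the Python output).
 */
bool approx_equal(float actual, float expected, float rel_tol, float abs_tol) {
  assert(rel_tol >= 0.0f && abs_tol >= 0.0f);
  float diff = fabsf(actual - expected);
  return diff <= abs_tol + rel_tol * fabsf(expected);
}
```

The absolute tolerance matters for values near zero (such as very small MSE values), where a purely relative check would be too strict.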

Converting the Autoencoder to C

Because we’ll use the TensorFlow Lite for Microcontrollers Arduino library, we won’t need to develop as many manual calculation functions for the Autoencoder as we did for the MD. However, you’ll still want to run the Notebook autoencoder/anomaly-detection-tflite-conversion.ipynb to convert the model.

In that Notebook, we first create a TensorFlow Lite (.tflite) model out of the .h5 Keras model file. We then store the raw FlatBuffer model as a C array in a header file.

We’ll use the same normal and anomaly samples generated in the MD conversion notebook, so we’ll skip that step here.

Finally, we use those same normal and anomaly samples to generate MAD values, run inference with our model, and compute the mean squared error (MSE). We will compare these outputs to the outputs in Arduino to make sure they match.
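The MSE step is simple enough to sketch directly. The version below assumes the autoencoder’s input and output are both plain float arrays of the same length. The function name mean_squared_error is hypothetical.

```c
#include <assert.h>
#include <stddef.h>

/*
 * Mean squared error between the features fed to the autoencoder (input)
 * and its reconstruction (output). A larger value means the model
 * reconstructed the sample poorly, which suggests an anomaly.
 */
float mean_squared_error(const float *input, const float *output, size_t n) {
  assert(input != NULL && output != NULL && n > 0);
  float sum = 0.0f;
  for (size_t i = 0; i < n; i++) {
    float err = input[i] - output[i];
    sum += err * err;
  }
  return sum / (float)n;
}
```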

Run the Arduino sketch found in autoencoder/esp32_test_tflite/esp_test_tflite.ino on your ESP32. Don’t forget to copy the generated .h files over to your sketch folder! When you open the Serial monitor, you should see the output of the MAD calculations, autoencoder, and MSE for both normal and anomaly samples.

Make sure they line up with the same computations in Python:

Once we’re sure that inference is working properly and our calculations are coming out correctly in C, we’re ready to create our deployment code!

Hardware Hookup

We’re going to connect a piezo buzzer to pin A1 on the ESP32 Feather. Everything is going to be done locally, so we won’t be using WiFi at all for deployment!

Mahalanobis Distance Deployment

Once you’re satisfied that the C code is working, open mahalanobis_distance/esp32_deploy_md/esp32_deploy_md.ino in Arduino. Copy your utils.h, utils.c, and model (.h) file to the sketch folder. Adjust the threshold, and you’re ready to go! Upload the sketch to the ESP32.

Tape (or otherwise attach) the ESP32, accelerometer, and buzzer to your fan. Try to put it back in the same spot you collected samples from.

When you begin running the sketch with the fan running on low, nothing should happen. If you hit the fan blades or shake the fan base, the piezo buzzer should sound.

From my experimentation, the MD method (with the new robust model) does not work very well. I could only create an anomaly by violently shaking the fan! So, let’s try the autoencoder.

Autoencoder Deployment

Just like we did with the MD sketch, open autoencoder/esp32_deploy_tflite/esp32_deploy_tflite.ino. Copy the utils and model.h files into the sketch directory. Adjust the threshold as necessary (this may require tweaking once you deploy the system).
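In both deployments, the threshold is applied the same way: compute a score for the latest sample (the Mahalanobis Distance or the autoencoder’s MSE) and flag an anomaly when it exceeds the threshold. Here is a minimal sketch of that decision. The constant and function names are hypothetical, the values are the thresholds chosen earlier in this tutorial, and the use of a strict greater-than comparison is an assumption.

```c
#include <assert.h>
#include <stdbool.h>

/* Thresholds chosen from the plots earlier in this tutorial. */
#define MD_THRESHOLD 1.0f
#define AUTOENCODER_THRESHOLD 2e-05f

/*
 * Returns true when the score is above the threshold. Both scores are
 * non-negative by construction, so a negative threshold is treated as
 * a configuration mistake.
 */
bool is_anomaly(float score, float threshold) {
  assert(threshold >= 0.0f);
  return score > threshold;
}
```

In a sketch, the result of is_anomaly() would decide whether to sound the buzzer. Keeping the threshold in one named constant makes it easy to tweak after you see how the system behaves on the fan.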

Attach the sensor node to the fan and let it run. You should notice that the model is more robust: you can gently move the fan base around, and no alarm should sound. However, tap the fan blades or tape a few coins to one blade (to offset the motor), and the buzzer should begin to chirp loudly.

Try running the fan on high or turning it off to see if those modes count as anomalies!

Resources and Going Further

I hope this project has given you some ideas on how to create anomaly detection systems for your own projects! It’s certainly not perfect, but it should work as a decent starting point.

All of the code for this project can be found here: https://github.com/ShawnHymel/tinyml-example-anomaly-detection

Here is the math behind the Mahalanobis Distance (and the Python code that I used as the basis for my C implementation): https://www.machinelearningplus.com/statistics/mahalanobis-distance/

Here’s another great article on autoencoders: https://blog.keras.io/building-autoencoders-in-keras.html

Recommended Reading

Edge AI Anomaly Detection Part 2 - Feature Extraction and Model Training

Edge AI Anomaly Detection Part 3 - Machine Learning on Raspberry Pi