TinyML: Getting Started with STM32 X-CUBE-AI

2020-07-20 | By ShawnHymel

License: Attribution

STM32 X-CUBE-AI is a set of libraries and plugins for the STMicroelectronics CubeMX and STM32CubeIDE systems. It is a piece of the STM32Cube.AI framework released a few years ago as part of ST’s push into the realm of Edge AI and TinyML.

In the previous tutorial, I showed you how to run TensorFlow Lite for Microcontrollers on an STM32. While TensorFlow Lite is open source and runs on a wide variety of embedded systems, X-CUBE-AI is a proprietary library that runs only on STM32 devices. However, as we’ll see in this tutorial, X-CUBE-AI is faster and more space efficient than TensorFlow Lite.

I’ll walk you through the process of setting up a simple X-CUBE-AI project and running inference on a pre-trained neural network. Note that you will need an STM32 microcontroller with an ARM Cortex-M core, and it should be at least a Cortex-M3 or Cortex-M4. I will use a Nucleo-L432KC for this demo.

You can check out this tutorial in the video.

Overview

Microcontrollers are often very limited in resources compared to desktops, laptops, and servers (and even smartphones!). As a result, we generally need to perform model training on a separate computer before moving that model to our microcontroller.

X-CUBE-AI supports models trained with TensorFlow, Keras, PyTorch, Caffe and others. Regardless of what you use to train the model, the model file needs to be in Keras (.h5), TensorFlow Lite (.tflite), or ONNX (.onnx) format.

Once the model is loaded onto the microcontroller, we can write some code to perform inference. For example, if we trained a model to classify cats in a photo, we can feed the model new, unseen photos and it should (in theory) tell us whether or not there is a cat in that photo.

We will be using STM32CubeIDE for this tutorial. Please see this video for a crash course on how to use it, if you are not already familiar with it.

Model Training

We will use a model trained in a previous tutorial. Please follow the steps in that tutorial to generate a model in .h5, .tflite, and .h formats. Download all three files to your computer. Note that we will only need the .tflite version here, but it is helpful to have all three as backups (or for trying out other TinyML frameworks).

Install X-CUBE-AI

In STM32CubeIDE, click Help > Manage embedded software packages. In the pop-up window, select the STMicroelectronics tab. Click the drop-down arrow by X-CUBE-AI and check the most recent version of the Artificial Intelligence package (version 5.1.0 for me). Click Install Now.

When it’s done downloading, accept the license agreement and close out of the package manager window.

Project Configuration

Click File > New > STM32 Project. If you just chose to install the X-CUBE-AI package, you will have to wait the first time while the project manager finishes installing it, which could take a few minutes.

In the Target Selection window, click on the Board Selector tab and search for your development board (“Nucleo-L432KC” for me). Select your board in the Board List.

Click Next. Give your project a name and leave the other options as default (we can use C with X-CUBE-AI).

Click Finish. Select Yes if asked to initialize peripherals in their default modes and Yes again to open the CubeMX perspective.

In CubeMX, enable one of your timers (I’ll use Timer 16), and set the prescaler so that it ticks every microsecond (80 - 1 = 79 for an 80 MHz system clock). Set the AutoReload register with the maximum value (65535 for a 16-bit timer).
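The prescaler arithmetic above can be sketched in a few lines of C. This is only an illustration: prescaler_for_tick and ticks_per_rollover are hypothetical helper names (they are not part of the STM32 HAL), and the sketch assumes the timer's input clock equals the 80 MHz system clock.

```c
#include <stdint.h>

/* Hypothetical helper (not part of the STM32 HAL).
 * Returns the prescaler register value that makes a timer count at tick_hz
 * when its input clock runs at timer_clk_hz. The hardware divides the clock
 * by (prescaler + 1), which is why we enter 80 - 1 = 79 for an 80 MHz clock. */
uint32_t prescaler_for_tick(uint32_t timer_clk_hz, uint32_t tick_hz)
{
  return (timer_clk_hz / tick_hz) - 1U;
}

/* Hypothetical helper: number of ticks before the counter rolls over to zero
 * for a given auto-reload value (65535 gives 65536 ticks). */
uint32_t ticks_per_rollover(uint32_t auto_reload)
{
  return auto_reload + 1U;
}
```

Calling prescaler_for_tick(80000000, 1000000) gives 79, and ticks_per_rollover(65535) gives 65536. At one tick per microsecond, the counter rolls over roughly every 65.5 milliseconds, which is much longer than the inference time we measure later.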

Click on the Additional Software sub-tab. In that window, check the Artificial Intelligence component class. Click the drop-down arrows to expose all of the options. Check Core under X-CUBE-AI. Leave Application set as Not selected. Note that you can select an application template if you would like to see how ST recommends structuring a machine learning program.

You also have the option of uploading a System Performance or a Validation program if you would like to use the validation checker later. But for now, let’s just create a project with no application selected.

Click OK.

In the left (Categories) pane, drop down the Additional Software category and select STMicroelectronics.X-CUBE-AI. Make sure that it is activated (checked) in the Mode pane. In the Configuration pane, click Add network. Select TFLite for the network type and click Browse. Find and load the sine_model.tflite model file that you downloaded earlier. Give your model a name, like sine_model. Note that this name affects some of the function names later on.

If you scroll down in that pane and click the Analyze button, you can get an overview of your neural network model. This can be helpful for figuring out how much memory is required to store it and how many multiply-and-accumulate (MAC) operations are needed.
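To get a feel for the numbers Analyze reports, here is a rough back-of-the-envelope sketch for fully connected (dense) layers. The helper names are hypothetical, and the layer sizes in the usage note are an assumption made for illustration rather than something read from the model file. The tool's own report is the one to trust, since it may also account for activation functions and other details.

```c
#include <stdint.h>

/* Hypothetical helper: a dense layer performs one multiply-and-accumulate
 * (MAC) per weight, i.e. n_in * n_out MACs per inference. */
uint32_t dense_macs(uint32_t n_in, uint32_t n_out)
{
  return n_in * n_out;
}

/* Hypothetical helper: bytes needed to store one dense layer's parameters
 * as 32-bit floats (n_in * n_out weights plus n_out biases). */
uint32_t dense_param_bytes(uint32_t n_in, uint32_t n_out)
{
  return (n_in * n_out + n_out) * 4U;
}
```

For an assumed network with one input, two hidden layers of 16 neurons each, and one output, the sums come to 16 + 256 + 16 = 288 MACs and 128 + 1088 + 68 = 1284 bytes of float32 parameters.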

Validate on Desktop runs some tests with your model to make sure that inference works. Validate on Target runs tests on your microcontroller, which needs to be running the Validation program found in the Additional Software window. Feel free to experiment with these options, but we won’t cover them here. The Show Graph button gives you a graphical depiction of your neural network, much like the Netron program would.

If you click the gear icon in your model tab, you can assign additional external flash and RAM to assist you with inference. Once again, we won’t go into that here.

Note that enabling the X-CUBE-AI library also enables the cyclic redundancy check (CRC) hardware peripheral by default.

Head to the Clock Configuration tab. Under PLL Source Mux, change the input to the high speed internal clock, labeled HSI. For HCLK (MHz), enter the value “80.” Press ‘enter,’ and the CubeMX software should adjust all of the prescalers to give you an 80 MHz system clock.

Click File > Save and Yes when asked to generate code.

You should see a new X-CUBE-AI library appear in your project. In the App folder, you can find a set of source code files with <model_name>.[hc] and <model_name>_data.[hc]. These will contain your model in raw byte format along with functions needed to configure and use your model for inference.

I have run into some issues with CubeMX wanting to endlessly generate code when you try to build your project. As a result, I highly recommend closing the .ioc file (i.e. close the tab in the IDE) when you are done with CubeMX.

Code

Open main.c and take a look at the code I’ve copied below. You will need to write the sections that sit between the user code guard comments (e.g. /* USER CODE BEGIN … */ and /* USER CODE END … */). CubeMX preserves anything inside these guards when it regenerates code. Note that the auto-generated code (outside of these guards) may differ if you are using a different microcontroller.

/* USER CODE BEGIN Header */
/**
  ******************************************************************************
  * @file           : main.c
  * @brief          : Main program body
  ******************************************************************************
  * @attention
  *
  * <h2><center>© Copyright (c) 2020 STMicroelectronics.
  * All rights reserved.</center></h2>
  *
  * This software component is licensed by ST under BSD 3-Clause license,
  * the "License"; You may not use this file except in compliance with the
  * License. You may obtain a copy of the License at:
  *                        opensource.org/licenses/BSD-3-Clause
  *
  ******************************************************************************
  */
/* USER CODE END Header */

/* Includes ------------------------------------------------------------------*/
#include "main.h"

/* Private includes ----------------------------------------------------------*/
/* USER CODE BEGIN Includes */
#include <stdio.h>

#include "ai_datatypes_defines.h"
#include "ai_platform.h"
#include "sine_model.h"
#include "sine_model_data.h"
/* USER CODE END Includes */

/* Private typedef -----------------------------------------------------------*/
/* USER CODE BEGIN PTD */

/* USER CODE END PTD */

/* Private define ------------------------------------------------------------*/
/* USER CODE BEGIN PD */

/* USER CODE END PD */

/* Private macro -------------------------------------------------------------*/
/* USER CODE BEGIN PM */

/* USER CODE END PM */

/* Private variables ---------------------------------------------------------*/
CRC_HandleTypeDef hcrc;

TIM_HandleTypeDef htim16;

UART_HandleTypeDef huart2;

/* USER CODE BEGIN PV */

/* USER CODE END PV */

/* Private function prototypes -----------------------------------------------*/
void SystemClock_Config(void);
static void MX_GPIO_Init(void);
static void MX_USART2_UART_Init(void);
static void MX_CRC_Init(void);
static void MX_TIM16_Init(void);
/* USER CODE BEGIN PFP */

/* USER CODE END PFP */

/* Private user code ---------------------------------------------------------*/
/* USER CODE BEGIN 0 */

/* USER CODE END 0 */

/**
  * @brief  The application entry point.
  * @retval int
  */
int main(void)
{
  /* USER CODE BEGIN 1 */
  char buf[50];
  int buf_len = 0;
  ai_error ai_err;
  ai_i32 nbatch;
  uint32_t timestamp;
  float y_val;

  // Chunk of memory used to hold intermediate values for neural network
  AI_ALIGNED(4) ai_u8 activations[AI_SINE_MODEL_DATA_ACTIVATIONS_SIZE];

  // Buffers used to store input and output tensors
  AI_ALIGNED(4) ai_i8 in_data[AI_SINE_MODEL_IN_1_SIZE_BYTES];
  AI_ALIGNED(4) ai_i8 out_data[AI_SINE_MODEL_OUT_1_SIZE_BYTES];

  // Pointer to our model
  ai_handle sine_model = AI_HANDLE_NULL;

  // Initialize wrapper structs that hold pointers to data and info about the
  // data (tensor height, width, channels)
  ai_buffer ai_input[AI_SINE_MODEL_IN_NUM] = AI_SINE_MODEL_IN;
  ai_buffer ai_output[AI_SINE_MODEL_OUT_NUM] = AI_SINE_MODEL_OUT;

  // Set working memory and get weights/biases from model
  ai_network_params ai_params = {
    AI_SINE_MODEL_DATA_WEIGHTS(ai_sine_model_data_weights_get()),
    AI_SINE_MODEL_DATA_ACTIVATIONS(activations)
  };

  // Point the wrapper structs to our data buffers
  ai_input[0].n_batches = 1;
  ai_input[0].data = AI_HANDLE_PTR(in_data);
  ai_output[0].n_batches = 1;
  ai_output[0].data = AI_HANDLE_PTR(out_data);
  /* USER CODE END 1 */

  /* MCU Configuration--------------------------------------------------------*/

  /* Reset of all peripherals, Initializes the Flash interface and the Systick. */
  HAL_Init();

  /* USER CODE BEGIN Init */

  /* USER CODE END Init */

  /* Configure the system clock */
  SystemClock_Config();

  /* USER CODE BEGIN SysInit */

  /* USER CODE END SysInit */

  /* Initialize all configured peripherals */
  MX_GPIO_Init();
  MX_USART2_UART_Init();
  MX_CRC_Init();
  MX_TIM16_Init();
  /* USER CODE BEGIN 2 */

  // Start timer/counter
  HAL_TIM_Base_Start(&htim16);

  // Greetings!
  buf_len = sprintf(buf, "\r\n\r\nSTM32 X-Cube-AI test\r\n");
  HAL_UART_Transmit(&huart2, (uint8_t *)buf, buf_len, 100);

  // Create instance of neural network
  ai_err = ai_sine_model_create(&sine_model, AI_SINE_MODEL_DATA_CONFIG);
  if (ai_err.type != AI_ERROR_NONE)
  {
    buf_len = sprintf(buf, "Error: could not create NN instance\r\n");
    HAL_UART_Transmit(&huart2, (uint8_t *)buf, buf_len, 100);
    while(1);
  }

  // Initialize neural network
  if (!ai_sine_model_init(sine_model, &ai_params))
  {
    buf_len = sprintf(buf, "Error: could not initialize NN\r\n");
    HAL_UART_Transmit(&huart2, (uint8_t *)buf, buf_len, 100);
    while(1);
  }

  /* USER CODE END 2 */

  /* Infinite loop */
  /* USER CODE BEGIN WHILE */
  while (1)
  {
    // Fill input buffer (use test value)
    for (uint32_t i = 0; i < AI_SINE_MODEL_IN_1_SIZE; i++)
    {
      ((ai_float *)in_data)[i] = (ai_float)2.0f;
    }

    // Get current timestamp
    timestamp = htim16.Instance->CNT;

    // Perform inference
    nbatch = ai_sine_model_run(sine_model, &ai_input[0], &ai_output[0]);
    if (nbatch != 1) {
      buf_len = sprintf(buf, "Error: could not run inference\r\n");
      HAL_UART_Transmit(&huart2, (uint8_t *)buf, buf_len, 100);
    }

    // Read output (predicted y) of neural network
    y_val = ((float *)out_data)[0];

    // Print output of neural network along with inference time (microseconds).
    // TIM16 is a 16-bit counter, so truncate the difference to 16 bits to keep
    // the duration correct if the counter rolls over during inference.
    buf_len = sprintf(buf,
                      "Output: %f | Duration: %lu\r\n",
                      y_val,
                      (unsigned long)(uint16_t)(htim16.Instance->CNT - timestamp));
    HAL_UART_Transmit(&huart2, (uint8_t *)buf, buf_len, 100);

    // Wait before doing it again
    HAL_Delay(500);

    /* USER CODE END WHILE */

    /* USER CODE BEGIN 3 */
  }
  /* USER CODE END 3 */
}

/**
  * @brief System Clock Configuration
  * @retval None
  */
void SystemClock_Config(void)
{
  RCC_OscInitTypeDef RCC_OscInitStruct = {0};
  RCC_ClkInitTypeDef RCC_ClkInitStruct = {0};
  RCC_PeriphCLKInitTypeDef PeriphClkInit = {0};

  /** Initializes the CPU, AHB and APB busses clocks
  */
  RCC_OscInitStruct.OscillatorType = RCC_OSCILLATORTYPE_HSI;
  RCC_OscInitStruct.HSIState = RCC_HSI_ON;
  RCC_OscInitStruct.HSICalibrationValue = RCC_HSICALIBRATION_DEFAULT;
  RCC_OscInitStruct.PLL.PLLState = RCC_PLL_ON;
  RCC_OscInitStruct.PLL.PLLSource = RCC_PLLSOURCE_HSI;
  RCC_OscInitStruct.PLL.PLLM = 1;
  RCC_OscInitStruct.PLL.PLLN = 10;
  RCC_OscInitStruct.PLL.PLLP = RCC_PLLP_DIV7;
  RCC_OscInitStruct.PLL.PLLQ = RCC_PLLQ_DIV2;
  RCC_OscInitStruct.PLL.PLLR = RCC_PLLR_DIV2;
  if (HAL_RCC_OscConfig(&RCC_OscInitStruct) != HAL_OK)
  {
    Error_Handler();
  }
  /** Initializes the CPU, AHB and APB busses clocks
  */
  RCC_ClkInitStruct.ClockType = RCC_CLOCKTYPE_HCLK|RCC_CLOCKTYPE_SYSCLK
                              |RCC_CLOCKTYPE_PCLK1|RCC_CLOCKTYPE_PCLK2;
  RCC_ClkInitStruct.SYSCLKSource = RCC_SYSCLKSOURCE_PLLCLK;
  RCC_ClkInitStruct.AHBCLKDivider = RCC_SYSCLK_DIV1;
  RCC_ClkInitStruct.APB1CLKDivider = RCC_HCLK_DIV1;
  RCC_ClkInitStruct.APB2CLKDivider = RCC_HCLK_DIV1;

  if (HAL_RCC_ClockConfig(&RCC_ClkInitStruct, FLASH_LATENCY_4) != HAL_OK)
  {
    Error_Handler();
  }
  PeriphClkInit.PeriphClockSelection = RCC_PERIPHCLK_USART2;
  PeriphClkInit.Usart2ClockSelection = RCC_USART2CLKSOURCE_PCLK1;
  if (HAL_RCCEx_PeriphCLKConfig(&PeriphClkInit) != HAL_OK)
  {
    Error_Handler();
  }
  /** Configure the main internal regulator output voltage
  */
  if (HAL_PWREx_ControlVoltageScaling(PWR_REGULATOR_VOLTAGE_SCALE1) != HAL_OK)
  {
    Error_Handler();
  }
}

/**
  * @brief CRC Initialization Function
  * @param None
  * @retval None
  */
static void MX_CRC_Init(void)
{

  /* USER CODE BEGIN CRC_Init 0 */

  /* USER CODE END CRC_Init 0 */

  /* USER CODE BEGIN CRC_Init 1 */

  /* USER CODE END CRC_Init 1 */
  hcrc.Instance = CRC;
  hcrc.Init.DefaultPolynomialUse = DEFAULT_POLYNOMIAL_ENABLE;
  hcrc.Init.DefaultInitValueUse = DEFAULT_INIT_VALUE_ENABLE;
  hcrc.Init.InputDataInversionMode = CRC_INPUTDATA_INVERSION_NONE;
  hcrc.Init.OutputDataInversionMode = CRC_OUTPUTDATA_INVERSION_DISABLE;
  hcrc.InputDataFormat = CRC_INPUTDATA_FORMAT_BYTES;
  if (HAL_CRC_Init(&hcrc) != HAL_OK)
  {
    Error_Handler();
  }
  /* USER CODE BEGIN CRC_Init 2 */

  /* USER CODE END CRC_Init 2 */

}

/**
  * @brief TIM16 Initialization Function
  * @param None
  * @retval None
  */
static void MX_TIM16_Init(void)
{

  /* USER CODE BEGIN TIM16_Init 0 */

  /* USER CODE END TIM16_Init 0 */

  /* USER CODE BEGIN TIM16_Init 1 */

  /* USER CODE END TIM16_Init 1 */
  htim16.Instance = TIM16;
  htim16.Init.Prescaler = 80 - 1;
  htim16.Init.CounterMode = TIM_COUNTERMODE_UP;
  htim16.Init.Period = 65536 - 1;
  htim16.Init.ClockDivision = TIM_CLOCKDIVISION_DIV1;
  htim16.Init.RepetitionCounter = 0;
  htim16.Init.AutoReloadPreload = TIM_AUTORELOAD_PRELOAD_DISABLE;
  if (HAL_TIM_Base_Init(&htim16) != HAL_OK)
  {
    Error_Handler();
  }
  /* USER CODE BEGIN TIM16_Init 2 */

  /* USER CODE END TIM16_Init 2 */

}

/**
  * @brief USART2 Initialization Function
  * @param None
  * @retval None
  */
static void MX_USART2_UART_Init(void)
{

  /* USER CODE BEGIN USART2_Init 0 */

  /* USER CODE END USART2_Init 0 */

  /* USER CODE BEGIN USART2_Init 1 */

  /* USER CODE END USART2_Init 1 */
  huart2.Instance = USART2;
  huart2.Init.BaudRate = 115200;
  huart2.Init.WordLength = UART_WORDLENGTH_8B;
  huart2.Init.StopBits = UART_STOPBITS_1;
  huart2.Init.Parity = UART_PARITY_NONE;
  huart2.Init.Mode = UART_MODE_TX_RX;
  huart2.Init.HwFlowCtl = UART_HWCONTROL_NONE;
  huart2.Init.OverSampling = UART_OVERSAMPLING_16;
  huart2.Init.OneBitSampling = UART_ONE_BIT_SAMPLE_DISABLE;
  huart2.AdvancedInit.AdvFeatureInit = UART_ADVFEATURE_NO_INIT;
  if (HAL_UART_Init(&huart2) != HAL_OK)
  {
    Error_Handler();
  }
  /* USER CODE BEGIN USART2_Init 2 */

  /* USER CODE END USART2_Init 2 */

}

/**
  * @brief GPIO Initialization Function
  * @param None
  * @retval None
  */
static void MX_GPIO_Init(void)
{
  GPIO_InitTypeDef GPIO_InitStruct = {0};

  /* GPIO Ports Clock Enable */
  __HAL_RCC_GPIOC_CLK_ENABLE();
  __HAL_RCC_GPIOA_CLK_ENABLE();
  __HAL_RCC_GPIOB_CLK_ENABLE();

  /*Configure GPIO pin Output Level */
  HAL_GPIO_WritePin(LD3_GPIO_Port, LD3_Pin, GPIO_PIN_RESET);

  /*Configure GPIO pin : LD3_Pin */
  GPIO_InitStruct.Pin = LD3_Pin;
  GPIO_InitStruct.Mode = GPIO_MODE_OUTPUT_PP;
  GPIO_InitStruct.Pull = GPIO_NOPULL;
  GPIO_InitStruct.Speed = GPIO_SPEED_FREQ_LOW;
  HAL_GPIO_Init(LD3_GPIO_Port, &GPIO_InitStruct);

}

/* USER CODE BEGIN 4 */

/* USER CODE END 4 */

/**
  * @brief  This function is executed in case of error occurrence.
  * @retval None
  */
void Error_Handler(void)
{
  /* USER CODE BEGIN Error_Handler_Debug */
  /* User can add his own implementation to report the HAL error return state */

  /* USER CODE END Error_Handler_Debug */
}

#ifdef  USE_FULL_ASSERT
/**
  * @brief  Reports the name of the source file and the source line number
  *         where the assert_param error has occurred.
  * @param  file: pointer to the source file name
  * @param  line: assert_param error line source number
  * @retval None
  */
void assert_failed(uint8_t *file, uint32_t line)
{
  /* USER CODE BEGIN 6 */
  /* User can add his own implementation to report the file name and line number,
     tex: printf("Wrong parameters value: file %s on line %d\r\n", file, line) */
  /* USER CODE END 6 */
}
#endif /* USE_FULL_ASSERT */

/************************ (C) COPYRIGHT STMicroelectronics *****END OF FILE****/

Please refer to the video if you would like an explanation of what each section of code does.
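One detail worth spelling out is how the inference time is measured: we read the timer's counter before and after ai_sine_model_run() and subtract. Because the counter is only 16 bits wide, it rolls over to zero every 65,536 ticks, so the subtraction needs to be done modulo 65,536 to stay correct across a rollover. Here is a minimal sketch of that idea, where elapsed_ticks16 is a hypothetical helper name and the counter is assumed to tick once per microsecond as configured earlier.

```c
#include <stdint.h>

/* Hypothetical helper: elapsed ticks between two readings of a free-running
 * 16-bit up-counter. Truncating the unsigned difference to 16 bits performs
 * the subtraction modulo 65536, so the result is still correct if the
 * counter rolled over once between the two readings. */
uint16_t elapsed_ticks16(uint16_t start, uint16_t end)
{
  return (uint16_t)(end - start);
}
```

For example, elapsed_ticks16(100, 178) and elapsed_ticks16(65500, 42) both return 78. This only works if the measured interval is shorter than one full rollover period (about 65.5 milliseconds at 1 MHz).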

Add printf Float Support

In STM32CubeIDE, printf (and variants, like sprintf) does not support floating point values by default. To enable support, head to Project > Properties > C/C++ Build > Settings > Tool Settings tab > MCU GCC Linker > Miscellaneous (the linker entry is named MCU G++ Linker if you created a C++ project). In the Other flags pane, add the following line:

-u_printf_float

Do this for both Debug and Release configurations.

Save your code. Click Project > Build Project.

Run in Debug Mode

Click Run > Debug. Agree to open the debug perspective.

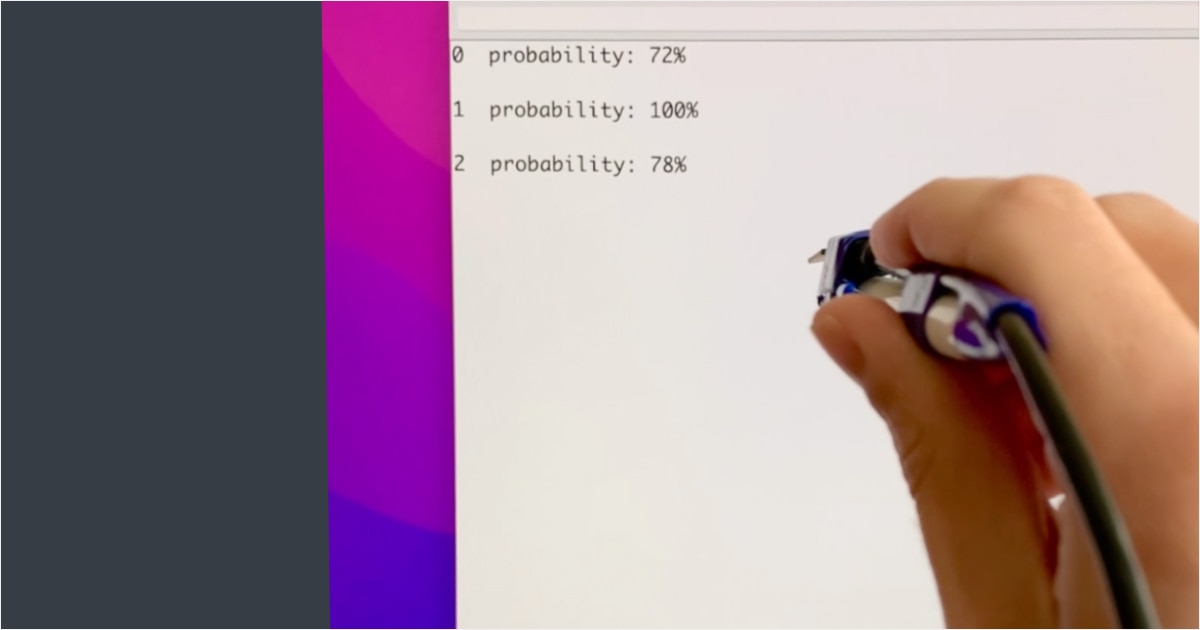

Click the Play button to start running code on your microcontroller. Open a serial terminal to your microcontroller (using a program such as PuTTY). You should see the output of inference, which should be the model prediction for sin(2.0), or approximately 0.909. Additionally, it should print out how long it took to perform inference, which was about 78 microseconds in my case.

Run in Release Mode

Creating a release configuration can sometimes save on code size and speed up runtime, as it uses different compiler optimizations and leaves off the -DDEBUG flag (some code snippets exist only when this flag is present to help with debugging an application).

Click Project > Build Configurations > Set Active > Release. Click Project > Build Project.

When that’s done, click Run > Run Configurations. In that window, in the left pane, click on the New Launch Configuration button, which should create a new configuration named <project name> Release.

In the Main tab, select Search Project and select your <project name>/Release/<project name>.elf file from the bottom pane. Click OK. Select Release for your Build Configuration. Click Apply.

Click Run. This should re-build your project. If it encounters any issues, click Run > Run to try again.

This is a good time to look at the memory requirements for your application. In the Console, find the output of the arm-none-eabi-size tool. Add text + data to get the estimated flash usage, and add data + bss to get the estimated RAM usage.
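The two sums above can be written out as a quick sketch. The function names are hypothetical and the numbers in the usage note are made up for illustration, so plug in the values that arm-none-eabi-size prints for your own build.

```c
#include <stdint.h>

/* Hypothetical helper: estimated flash usage in bytes.
 * text is code and constants; data is the initial values of initialized
 * variables, which are stored in flash and copied to RAM at startup. */
uint32_t flash_usage(uint32_t text, uint32_t data)
{
  return text + data;
}

/* Hypothetical helper: estimated static RAM usage in bytes.
 * data is initialized variables; bss is zero-initialized variables. */
uint32_t ram_usage(uint32_t data, uint32_t bss)
{
  return data + bss;
}
```

With made-up values of text = 30000, data = 500, and bss = 2000, this gives an estimated 30500 bytes of flash and 2500 bytes of RAM. Note that data is counted in both totals because it occupies space in flash and in RAM.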

When it’s done, your program should start running automatically. Open the serial terminal. The output of the inference engine should be the same, but you might see a slight increase in inference speed (77 microseconds for me this time).

Comparison to TensorFlow Lite for Microcontrollers

The previous tutorial showed how we can use TensorFlow Lite for Microcontrollers with the same neural network model to perform inference. There are some differences between the two frameworks, as outlined in this graphic:

As you can see, X-CUBE-AI is faster and takes up less memory than TensorFlow Lite. However, it is closed source and works only on STM32 processors, which might be a showstopper for some people.

Going Further

I hope that this has helped you get started with the X-CUBE-AI package for the STM32 line! I found it easier to use and faster than TensorFlow Lite, but it is less portable to other systems. Here is some reading material if you would like to learn more about it: