Use Infrared, Time-of-Flight Imaging to Enhance Robotic Capabilities

Contributed By DigiKey's North American Editors

2016-05-19

Imaging is critical for many robotic applications, allowing robots to perform basic tasks, avoid obstacles, navigate, and ensure basic safety. The obvious way to provide imaging is with a low-cost video camera or, better still, two of them for stereo vision and depth perception. But the latter approach has a few drawbacks.

Using dual cameras for 3-D imaging increases power consumption, space requirements, and cost, while complicating the form factor and the manufacturing process. As 3-D imaging goes mainstream in applications spanning basic "assist" units to autonomous vehicles, designers need a better alternative than simply adding more cameras.

To that end, designers are increasingly using alternatives, which offer advantages in packaging, cost, power consumption, data reduction, and overall performance. Among the alternatives are time-of-flight (ToF) imaging systems (often called Light Detection and Ranging, or LIDAR). These can be complemented by infrared (IR) imaging, often called thermography.

Start with IR

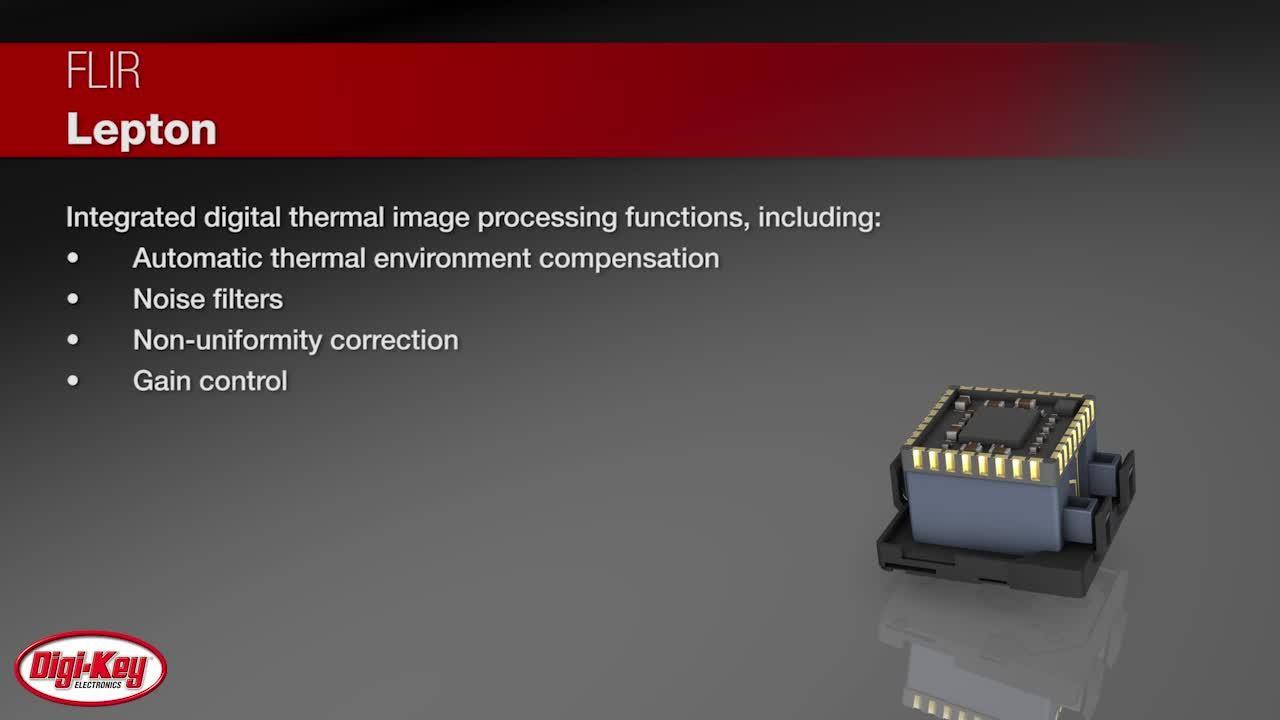

Infrared electromagnetic waves have wavelengths which are longer than those at the red end of the visible spectrum; wavelengths of the IR band are generally considered to range from 700 nm (0.7 μm) to 1 mm (1000 μm). In simplest terms, they represent heat radiated from an object. With an appropriate IR imaging system, this IR "heat map" is transformed into a visible light image, often with false color added to highlight the relative temperatures (Figure 1).

Figure 1: An infrared image of water pouring from a spout into a tub; note use of "false color" to better convey the temperature differences. (Image courtesy of FLIR Systems, Inc.)
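The false-color step can be illustrated with a minimal Python sketch. This is not FLIR's processing pipeline. It simply assumes a small grid of temperatures and maps each one linearly onto a blue-to-red ramp. The function name `false_color`, the color ramp, and the sample temperatures are all hypothetical.

```python
def false_color(temps_c):
    """Map a 2-D list of temperatures to (R, G, B) tuples.

    Assumed ramp: the coldest value in the scene becomes pure blue,
    the hottest becomes pure red, with a linear blend in between.
    """
    flat = [t for row in temps_c for t in row]
    t_min, t_max = min(flat), max(flat)
    span = (t_max - t_min) or 1.0  # avoid divide-by-zero on a uniform scene

    image = []
    for row in temps_c:
        out_row = []
        for t in row:
            x = (t - t_min) / span  # normalized temperature, 0.0 to 1.0
            out_row.append((round(255 * x), 0, round(255 * (1 - x))))
        image.append(out_row)
    return image


# Hypothetical 2 x 2 "heat map" in degrees Celsius
scene = [[20.0, 22.0], [30.0, 40.0]]
pixels = false_color(scene)
```

Because the ramp is scaled to the minimum and maximum of each scene, it highlights relative rather than absolute temperature, which matches how the false color in Figure 1 is described.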

Note that IR imaging is not the same as IR sensing. Sensing is a non-contact method used to detect and even measure a heat source, such as a person passing in front of a passive infrared (PIR) sensor as part of an alarm system, or excessive heat from a pipe being monitored; there is no image-like detail or image resolution.

Why use IR instead of, or in addition to, conventional visible-light imaging? There are several reasons:

- IR is valuable when the objects of interest blend into their background, whether as deliberate concealment or just coincidence

- IR can help locate people or warm-blooded animals in the field of vision

- IR is also helpful when looking for common faults such as an overheated pipe, steam line, smoldering fire, or an electrical malfunction, which can cause localized heating

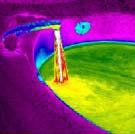

Implementing an IR imaging subsystem is now greatly simplified due to highly integrated, high-performance components with easy-to-use interfaces. Take, for example, the Lepton IR camera from FLIR Systems, Inc. (Figure 2). It measures approximately 10 × 12 × 6 mm deep and integrates a fixed-focus lens assembly, an 80 × 60 pixel long-wave infrared (LWIR) microbolometer sensor array for 8 to 14 μm IR, and signal-processing electronics.

Figure 2: The FLIR Lepton imager (shown without socket), is a highly integrated unit which includes signal processing and user-programmable features. (Image courtesy of FLIR Systems, Inc.)

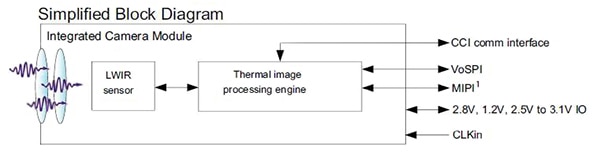

Interconnection is simplified through the use of industry-standard MIPI and SPI video interfaces, and the two-wire I2C-like serial-control interface (Figure 3). Despite its small size and ease of use, the Lepton unit features high performance, with a fast time-to-image of under 0.5 sec, and thermal sensitivity of less than 50 mK. Operating power is also low, at 150 mW (typical).

Figure 3: This simplified block diagram of the Lepton unit shows its integral image-processing unit in addition to the basic sensor array, as well as its simple interface to the user's system. (Image courtesy of FLIR Systems, Inc.)

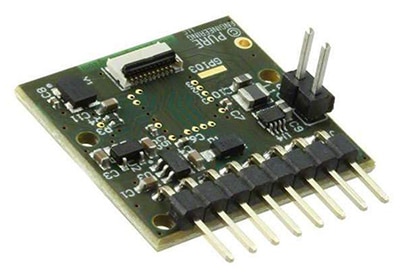

Products such as this benefit from hands-on user experience, so FLIR offers a breakout board for the camera module which is compatible with ARM-based evaluation boards and the Raspberry Pi (Figure 4). This 25 mm × 24 mm board requires a single 3 V to 5.5 V supply, and includes a 25 MHz system clock, internal LDOs for additional low-noise power rails, and standard interconnect headers, as well as a 32-pin Molex header for the Lepton module itself.

Figure 4: The Lepton breakout board (a) (shown minus Lepton unit), allows designers to evaluate and program the unit using various evaluation boards due to its standard connector and interface (b). (Images courtesy of FLIR Systems, Inc.)

ToF takes flight

For many applications, a thermal image is useful but insufficient, and a visible-light imaging system is needed, often in 3-D. The obvious solution is to get a standard video camera, as these are now available from dozens of vendors in many resolutions, light sensitivities, sizes, and interface options. If stereo imaging is required, use a pair of them.

Unlike thermography, which has been available for decades (although with cruder performance and higher cost and power dissipation than today's units), ToF is relatively new. It was first proposed as an academic concept in the 1990s, yet the requisite components and processing capabilities which make it practical have only been available in the past decade.

ToF is often the preferred imaging approach for many applications, including autonomous vehicles (self-driving cars), and has literally been road tested for millions of miles. (In terms of architecture, a smart or autonomous vehicle is a specialized type of robotic system on wheels, with sensors, algorithms, and defined actions; it's just a matter of perspective.) The ToF approach actually has some distinct advantages compared to a conventional imaging camera (discussed below).

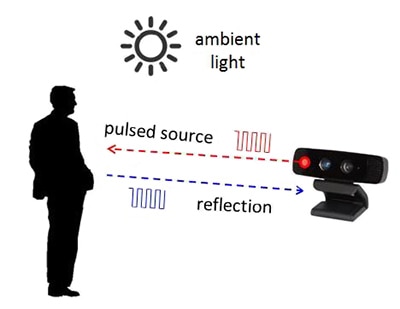

How it works: Unlike conventional camera imaging, where the operating principles are generally understood by engineers and are somewhat intuitive, ToF is less well known and relies on equations as much as sensed photons for implementation. Its two key components are a precisely controlled and modulated light source, which is either a solid-state laser or an LED (usually operating in the 850 nm near-IR range and so invisible to the human eye), and a pixel array to "see" the reflections of the emitted light from the scene being imaged (Figure 5).

Figure 5: The high-level concept of ToF is simple: project a pulsed light at the scene, and capture the reflected light pulses and their timing; actual implementation is much more complicated but is now practical. (Image courtesy of Texas Instruments)

Fully understanding the ToF principle requires the equations that define its operation, along with an accounting of some unavoidable error sources and how to compensate for them. The overall ToF process can be done in two ways: the light source is either repeatedly pulsed at a low duty cycle, or it is modulated by a continuous sine- or square-wave source. If pulsed mode is used, the reflected light energy is sampled using two out-of-phase windows, and these samples are used to compute the distance to the target. If continuous mode is used, the sensor takes four samples per measurement, with each sample offset by 90°, so that the phase angle between the illumination and the reflection, and thus the distance, can be computed.
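The two measurement modes can be sketched in Python under idealized assumptions: no ambient light in the pulsed case, no noise, and a target within the unambiguous range. The function names, the sample ordering (0°, 90°, 180°, 270°), and the cosine correlation model in `simulate_cw_samples` are assumptions made for illustration, not the convention of any particular sensor.

```python
import math

C = 299_792_458.0  # speed of light, m/s


def pulsed_distance(q1, q2, pulse_width_s):
    """Pulsed mode: distance from two out-of-phase integration windows.

    q1: energy collected in the window aligned with the emitted pulse
    q2: energy collected in the window that opens as the pulse ends
    The fraction q2 / (q1 + q2) is the round-trip delay as a share of
    the pulse width.
    """
    return 0.5 * C * pulse_width_s * q2 / (q1 + q2)


def cw_distance(a0, a90, a180, a270, mod_freq_hz):
    """Continuous mode: distance from four samples offset by 90 degrees."""
    # Differencing opposite samples cancels any constant (ambient) offset.
    phase = math.atan2(a90 - a270, a0 - a180) % (2 * math.pi)
    return C * phase / (4 * math.pi * mod_freq_hz)


def simulate_cw_samples(distance_m, mod_freq_hz, amplitude=1.0, offset=2.0):
    """Hypothetical ideal sensor: each sample is a cosine correlation value."""
    phase = 4 * math.pi * mod_freq_hz * distance_m / C
    return [offset + amplitude * math.cos(phase - math.radians(k))
            for k in (0, 90, 180, 270)]


# A target at 2 m seen by an assumed 20 MHz continuous-wave system
samples = simulate_cw_samples(2.0, 20e6)
d_cw = cw_distance(*samples, 20e6)

# An assumed 50 ns pulse with one quarter of the energy in the second window
d_pulsed = pulsed_distance(q1=3.0, q2=1.0, pulse_width_s=50e-9)
```

In both cases the distance comes from ratios or differences of integrated samples rather than from timing a single edge, which is why precise synchronization between the light source and the sensor matters so much.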

The output of a ToF sequence and calculations is a cloud of points representing the imaged areas, thus the term "point cloud."[1]
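As a rough illustration of how per-pixel distances become a point cloud, the sketch below assumes an ideal pinhole camera model and treats each measurement as a radial distance along the ray through that pixel. The function name and the intrinsic parameters (`fx`, `fy`, `cx`, `cy`, in pixels) are hypothetical. A real system would also apply lens-distortion and calibration corrections.

```python
import math


def depth_to_point_cloud(radial_m, fx, fy, cx, cy):
    """Convert a 2-D list of radial distances (m) to a list of (X, Y, Z) points.

    Pixels with no valid measurement are given as None and are skipped.
    """
    points = []
    for v, row in enumerate(radial_m):
        for u, r in enumerate(row):
            if r is None:
                continue
            # Direction of the ray through pixel (u, v) in a pinhole model
            x = (u - cx) / fx
            y = (v - cy) / fy
            norm = math.sqrt(x * x + y * y + 1.0)
            # Scale the unit ray by the measured radial distance
            points.append((r * x / norm, r * y / norm, r / norm))
    return points


# Hypothetical 2 x 2 distance map with one invalid pixel
cloud = depth_to_point_cloud(
    [[1.0, None], [2.0, 2.0]], fx=100.0, fy=100.0, cx=0.5, cy=0.5
)
```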

Relative pros and cons: Deciding between one- or two-camera conventional imaging and a ToF approach involves tradeoffs centered on the specifics of the application. For basic inspection and defect recognition, where the object is known in advance and the objective is feature extraction/comparison within a controlled lighting environment, a single camera providing a 2-D image is often the best choice. However, if the lighting varies, ToF may be better, as it is less affected by ambient light variations.

For 3-D imaging using two conventional cameras, the decision has more dimensions, beginning with mechanical and mounting issues. Even if these are not concerns, the system which processes the image must have robust algorithms to solve the "correspondence" problem of matching a point in the scene from one camera with the same point in the scene from the second camera. Doing so requires a substantial amount of color or grey-scale variation, and depth accuracy is often limited when the scene being imaged contains uniform surfaces. In contrast, a ToF system is less affected by mechanical issues as well as lighting and contrast concerns, and does not require image-correspondence matching for a 3-D result.
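The correspondence problem can be illustrated with a toy one-dimensional block matcher. This sketch assumes rectified images, so matching points lie on the same row, and uses a sum-of-absolute-differences (SAD) cost. The function names, pixel values, focal length, and baseline are all hypothetical, and production stereo pipelines are considerably more elaborate.

```python
def match_costs(left_row, right_row, x, half_window=1, max_disparity=4):
    """SAD cost of matching the window around left_row[x] at each disparity."""
    costs = {}
    for d in range(max_disparity + 1):
        if x - d - half_window < 0:
            break  # candidate window would fall off the image edge
        costs[d] = sum(
            abs(left_row[x + k] - right_row[x - d + k])
            for k in range(-half_window, half_window + 1)
        )
    return costs


def best_disparities(costs):
    """Return every disparity that ties for the lowest cost."""
    lowest = min(costs.values())
    return [d for d, c in costs.items() if c == lowest]


def depth_from_disparity(disparity_px, focal_px, baseline_m):
    """Standard rectified-stereo relation: depth = focal length x baseline / disparity."""
    return focal_px * baseline_m / disparity_px


# Textured scene: the left row is the right row shifted by two pixels
right_textured = [10, 50, 90, 30, 70, 20, 60, 80]
left_textured = [0, 0, 10, 50, 90, 30, 70, 20]
textured_match = best_disparities(match_costs(left_textured, right_textured, x=5))

# Uniform surface: every candidate window looks identical
flat = [40] * 8
uniform_match = best_disparities(match_costs(flat, flat, x=5))

# Assumed 500-pixel focal length and 6 cm baseline
depth_m = depth_from_disparity(textured_match[0], focal_px=500.0, baseline_m=0.06)
```

With texture, a single disparity wins and the depth follows directly. On the uniform surface every candidate ties, so the matcher has no basis for choosing a depth, which is the limitation a ToF system avoids by measuring distance at each pixel.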

Due to their fast response time and ability to handle wide ranges in subject characteristics, as well as the nature of the point cloud they generate, ToF systems are well suited for translating gestures of the hands, face, or body into commands, as well as capturing surroundings in autonomous vehicles. However, solutions using conventional single- or two-camera setups have lower cost, due to the wide availability of many basic imaging cameras.

Implementation: A ToF system has five major functional blocks:

- Light source: Generates the carefully timed light pulses

- Optics: A lens to focus the light on the sensor; it will likely also have an optical bandpass filter to reduce ambient-light "noise"

- Image sensor: Captures the reflections of the emitted light from the scene being illuminated

- Management electronics: Controls and synchronizes the illumination unit and the image sensor

- Computation unit and interface: Calculates distance based on timing of illumination versus returned and sensed photons

Selecting the light emitter and the imaging sensor are the first steps in configuring a ToF system. One emitter choice is a diode such as the Vishay VSMY1850X01, an 850 nm IR device designed for high-speed operation. It supports 10 ns rise and fall times when driven by 100 mA, making it a good fit for the pulse mode.
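To put nanosecond-scale edge times in perspective, the short sketch below converts a round-trip time into the equivalent target distance and back. It is plain arithmetic using the speed of light. The function names and the 1 cm example are illustrative assumptions.

```python
C = 299_792_458.0  # speed of light, m/s


def round_trip_distance_m(time_s):
    """Target distance whose out-and-back travel takes time_s."""
    return C * time_s / 2.0


def round_trip_time_s(distance_m):
    """Out-and-back travel time for a target at distance_m."""
    return 2.0 * distance_m / C


# A 10 ns edge spans the same time as a round trip to a target about 1.5 m away
edge_equiv_m = round_trip_distance_m(10e-9)

# Round-trip time difference corresponding to 1 cm of depth (tens of picoseconds)
one_cm_time_s = round_trip_time_s(0.01)
```

The numbers show why emitter speed matters: at these distances, the timing differences of interest are comparable to or much shorter than the emitter's own rise and fall times.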

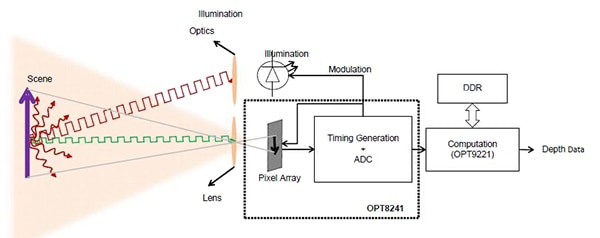

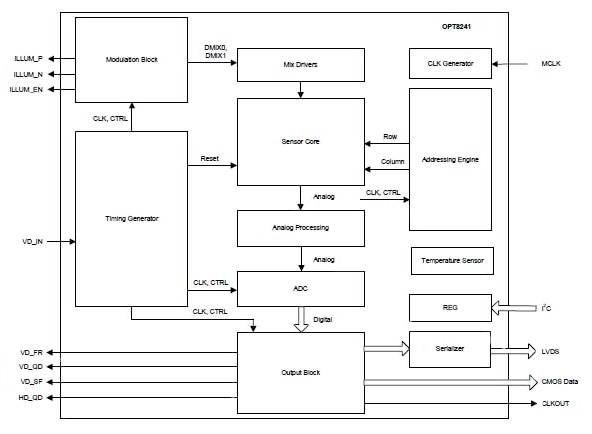

The sensor or pixel array is the heart of the ToF system, and is now available as part of a larger, more integrated device such as the Texas Instruments OPT8241 Time-of-Flight sensor/controller IC (Figure 6). It contains the image sensor (the third block in the list above) and the management electronics (the fourth block), as seen in its simplified block diagram (Figure 7), and provides digitized reflection data to a coprocessor such as the OPT9221 Time-of-Flight Controller, which computes the depth data from the digitized sensor data. The OPT9221 also implements various correction functions including antialiasing, nonlinearity compensation, and temperature compensation.

Figure 6: Using the TI OPT8241 in conjunction with the OPT9221 computation engine, designers can build a ToF system with modest hardware effort and relatively few components.

Figure 7: The simplified internal block diagram of the OPT8241 shows some of the complexity it uses to implement the ToF front end; it includes modulation control and driver for the illumination LED. (Image courtesy of Texas Instruments)

As with any video system which is designed not only to capture a scene but to do so in a consistent and useful way, ToF software design is not trivial. TI offers a detailed user's guide[2], as well as an estimator tool which enables designers to assess the performance and interplay of parameters such as depth resolution, 2-D resolution (number of pixels), distance range, frame rate, field of view (FoV), ambient light, and reflectivity of the object.
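Two of these interactions can be approximated with simple geometry. The sketch below is not TI's estimator tool. It is a back-of-the-envelope illustration that assumes a continuous-wave system, where phase wraps after one modulation period, and an ideal lens. The function names and the example values (a 320-pixel-wide array, a 60° field of view, the listed modulation frequencies) are hypothetical.

```python
import math

C = 299_792_458.0  # speed of light, m/s


def unambiguous_range_m(mod_freq_hz):
    """Largest distance before the measured phase wraps past 360 degrees."""
    return C / (2.0 * mod_freq_hz)


def pixel_footprint_m(distance_m, fov_deg, pixels_across):
    """Approximate width of scene covered by one pixel at a given distance."""
    scene_width = 2.0 * distance_m * math.tan(math.radians(fov_deg) / 2.0)
    return scene_width / pixels_across


# Higher modulation frequency shortens the usable distance range
ranges = {f: unambiguous_range_m(f) for f in (10e6, 20e6, 40e6)}

# Wider FoV or fewer pixels coarsens the 2-D resolution at the target
footprint = pixel_footprint_m(distance_m=2.0, fov_deg=60.0, pixels_across=320)
```

Even this simplified view shows the kind of tradeoff an estimator helps explore: doubling the modulation frequency halves the unambiguous range, and widening the field of view enlarges the area each pixel must represent.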

Conclusion

Designers of robotic systems have always been challenged by how to provide a detailed "sense of surrounding". Fortunately, today's designers have access to high-performance, powerful, and relatively low-cost technologies including infrared imaging, conventional video cameras, and even LIDAR based on time-of-flight principles. As a result, and due to their low overall power consumption, many complete designs use a combination of these approaches to overcome the shortcomings of any single technique and so provide a fuller, multidimensional picture.

References:

1. "Time-of-Flight Camera - An Introduction," Texas Instruments

2. "Introduction to the Time-of-Flight (ToF) System Design," Texas Instruments
